Hello. I need suggestions on how to make Syncthing sync faster.

I run a server (a machine that only receives data) with 16 HDDs that syncs data from 20 remote clients. The server has a 10 Gbps network connection and a modern CPU. The clients (machines that only send data) run different operating systems and are located in different countries; some are in the same datacenter as the server. Most clients have a 1 Gbps network connection and are connected directly to the WAN. Syncthing uses only TCP connections. Nothing else runs on the server except Syncthing and an SSH server (nearly idle). The latest stable versions of Go and Syncthing are used, and all clients run the same Syncthing version.

(Note: for now I am using Syncthing only to sync the whole data set. I disabled the file watcher and set the full scan interval to 3 months, because I cannot even finish the initial sync; it is extremely slow.)

Problem: sync speed over the network is very slow. The initial sync has been running for about a month and still has not finished. I often see 0 bps. Sometimes it is 4 Mbps, sometimes 20 Mbps. Very rarely, and only for a few seconds, I see 100, 200, or 300 Mbps.

The network link is a shared 10 Gbps port. iperf shows 7 Gbit/s on a single connection. The hard drives are not overloaded. All drives are enterprise datacenter SATA III (6 Gbps) HDDs. Nearly every client has its own dedicated HDD in the Syncthing server, so one client cannot stall the sync for all the others; in terms of HDD load, the clients should run in parallel. The CPU is not overloaded. 32 CPU cores are available to Syncthing (and system processes). RAM is used up to the configured limit: GOMEMLIMIT=10737418240 GOGC=off

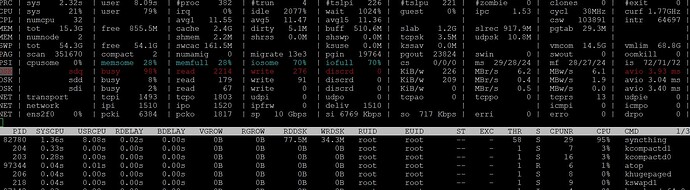

CPU and RAM profiling attached.

The only limitation is RAM. The limit is set to 10240 MB, and the GC runs to stay within it.
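As a quick sanity check on the memory limit, the sketch below converts the raw byte value passed in GOMEMLIMIT into binary units. It assumes the "10240 MB" above is meant as MiB; the constant name is only illustrative.

```python
# Byte value taken from the GOMEMLIMIT setting quoted in the post.
GOMEMLIMIT_BYTES = 10_737_418_240

# Convert to binary units (1 MiB = 2**20 bytes, 1 GiB = 2**30 bytes).
limit_mib = GOMEMLIMIT_BYTES / 2**20
limit_gib = GOMEMLIMIT_BYTES / 2**30

print(f"GOMEMLIMIT = {limit_mib:.0f} MiB = {limit_gib:.0f} GiB")
```

The Go runtime also accepts unit suffixes for this variable, so `GOMEMLIMIT=10GiB` should be an equivalent and more readable way to write the same limit.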

Syncthing index DB size: 29G index-v0.14.0.db

Total local data on the Syncthing server: 16 357 304 folders, 3 451 993 files, ~2.42 TiB.

Estimated total that needs to be on the server for all clients after the initial sync: 50M folders, 100M files, 100 TB of data.
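To put the observed rates in perspective, here is a rough back-of-the-envelope sketch of how long 100 TB takes at a few sustained aggregate rates. It assumes decimal terabytes (10^12 bytes) and ignores protocol overhead, hashing, and per-file costs, so real numbers would be worse; the function name is illustrative.

```python
def days_to_transfer(total_bytes: float, rate_bits_per_s: float) -> float:
    """Days needed to move total_bytes at a constant rate, with no overhead."""
    seconds = total_bytes * 8 / rate_bits_per_s
    return seconds / 86_400  # seconds per day


TOTAL_BYTES = 100e12  # 100 TB target, assumed to be decimal TB

# Rates taken from the post: typical observed, best observed,
# the per-client goal times 20 clients, and the iperf single-stream result.
RATES = [
    ("20 Mbps (typical)", 20e6),
    ("300 Mbps (rare peak)", 300e6),
    ("2 Gbps (20 clients x 100 Mbps)", 2e9),
    ("7 Gbps (iperf)", 7e9),
]

for label, rate in RATES:
    print(f"{label:>32}: {days_to_transfer(TOTAL_BYTES, rate):8.1f} days")
```

At the typical observed rate the initial sync would take more than a year, while the 100 Mbps per client goal across all 20 clients would bring it down to a few days.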

Currently connected clients: 4 (the others are disabled to speed up the sync of these 4).

The problem should not be on the client side: everything that can be tuned on the clients has been tuned, and the clients are all different. It is unlikely that every single client has the same problem regardless of OS, country, and so on.

Settings as follows:

<configuration version="37">

<folder EACH SAME SETTINGS>

<filesystemType>basic</filesystemType>

<minDiskFree unit="GB">0</minDiskFree>

<versioning>

<cleanupIntervalS>3600</cleanupIntervalS>

<fsPath></fsPath>

<fsType>basic</fsType>

</versioning>

<copiers>0</copiers>

<pullerMaxPendingKiB>0</pullerMaxPendingKiB>

<hashers>0</hashers>

<order>alphabetic</order>

<ignoreDelete>false</ignoreDelete>

<scanProgressIntervalS>-1</scanProgressIntervalS>

<pullerPauseS>0</pullerPauseS>

<maxConflicts>10</maxConflicts>

<disableSparseFiles>true</disableSparseFiles>

<disableTempIndexes>true</disableTempIndexes>

<paused>false</paused>

<weakHashThresholdPct>25</weakHashThresholdPct>

<markerName>.stfolder</markerName>

<copyOwnershipFromParent>false</copyOwnershipFromParent>

<modTimeWindowS>5</modTimeWindowS>

<maxConcurrentWrites>2</maxConcurrentWrites>

<disableFsync>true</disableFsync>

<blockPullOrder>standard</blockPullOrder>

<copyRangeMethod>standard</copyRangeMethod>

<caseSensitiveFS>true</caseSensitiveFS>

<junctionsAsDirs>false</junctionsAsDirs>

<syncOwnership>false</syncOwnership>

<sendOwnership>false</sendOwnership>

<syncXattrs>false</syncXattrs>

<sendXattrs>false</sendXattrs>

<xattrFilter>

<maxSingleEntrySize>1024</maxSingleEntrySize>

<maxTotalSize>4096</maxTotalSize>

</xattrFilter>

</folder>

<device EACH SAME SETTINGS >

<address>dynamic</address>

<paused>false</paused>

<autoAcceptFolders>false</autoAcceptFolders>

<maxSendKbps>0</maxSendKbps>

<maxRecvKbps>0</maxRecvKbps>

<maxRequestKiB>0</maxRequestKiB>

<untrusted>false</untrusted>

<remoteGUIPort>0</remoteGUIPort>

</device>

<options>

<listenAddress>default</listenAddress>

<globalAnnounceServer>default</globalAnnounceServer>

<globalAnnounceEnabled>true</globalAnnounceEnabled>

<localAnnounceEnabled>false</localAnnounceEnabled>

<localAnnouncePort>21027</localAnnouncePort>

<localAnnounceMCAddr>[ff12::8384]:21027</localAnnounceMCAddr>

<maxSendKbps>0</maxSendKbps>

<maxRecvKbps>0</maxRecvKbps>

<reconnectionIntervalS>60</reconnectionIntervalS>

<relaysEnabled>false</relaysEnabled>

<relayReconnectIntervalM>10</relayReconnectIntervalM>

<startBrowser>false</startBrowser>

<natEnabled>false</natEnabled>

<natLeaseMinutes>60</natLeaseMinutes>

<natRenewalMinutes>30</natRenewalMinutes>

<natTimeoutSeconds>10</natTimeoutSeconds>

<urAccepted>-1</urAccepted>

<urSeen>3</urSeen>

<urUniqueID></urUniqueID>

<urURL>https://data.syncthing.net/newdata</urURL>

<urPostInsecurely>false</urPostInsecurely>

<urInitialDelayS>1800</urInitialDelayS>

<autoUpgradeIntervalH>0</autoUpgradeIntervalH>

<upgradeToPreReleases>false</upgradeToPreReleases>

<keepTemporariesH>24</keepTemporariesH>

<cacheIgnoredFiles>false</cacheIgnoredFiles>

<progressUpdateIntervalS>-1</progressUpdateIntervalS>

<limitBandwidthInLan>false</limitBandwidthInLan>

<minHomeDiskFree unit="%">1</minHomeDiskFree>

<releasesURL>https://upgrades.syncthing.net/meta.json</releasesURL>

<overwriteRemoteDeviceNamesOnConnect>false</overwriteRemoteDeviceNamesOnConnect>

<tempIndexMinBlocks>10</tempIndexMinBlocks>

<trafficClass>0</trafficClass>

<setLowPriority>true</setLowPriority>

<maxFolderConcurrency>32</maxFolderConcurrency>

<crashReportingURL>https://crash.syncthing.net/newcrash</crashReportingURL>

<crashReportingEnabled>false</crashReportingEnabled>

<stunKeepaliveStartS>180</stunKeepaliveStartS>

<stunKeepaliveMinS>20</stunKeepaliveMinS>

<stunServer>default</stunServer>

<databaseTuning>auto</databaseTuning>

<maxConcurrentIncomingRequestKiB>0</maxConcurrentIncomingRequestKiB>

<announceLANAddresses>false</announceLANAddresses>

<sendFullIndexOnUpgrade>false</sendFullIndexOnUpgrade>

<connectionLimitEnough>0</connectionLimitEnough>

<connectionLimitMax>0</connectionLimitMax>

<insecureAllowOldTLSVersions>false</insecureAllowOldTLSVersions>

</options>

</configuration>
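When comparing settings across many folders, it can help to extract just a few values from the config instead of reading the whole file. The sketch below does that with Python's standard XML parser. The choice of keys is only an illustrative subset of the options shown above, and the folder id `client-a` in the sample is hypothetical; for a real run, the contents of the actual `config.xml` would be passed in instead of `SAMPLE`.

```python
import xml.etree.ElementTree as ET

# Illustrative subset of the per-folder and global options from the posted config.
FOLDER_KEYS = ["copiers", "hashers", "pullerMaxPendingKiB",
               "maxConcurrentWrites", "disableFsync", "disableTempIndexes"]
OPTION_KEYS = ["maxFolderConcurrency", "databaseTuning",
               "setLowPriority", "maxConcurrentIncomingRequestKiB"]


def summarize(config_xml: str) -> dict:
    """Return the selected settings per folder id, plus the global options."""
    root = ET.fromstring(config_xml)
    folders = {
        folder.get("id", "?"): {k: folder.findtext(k) for k in FOLDER_KEYS}
        for folder in root.findall("folder")
    }
    options = root.find("options")
    opts = {k: options.findtext(k) for k in OPTION_KEYS} if options is not None else {}
    return {"folders": folders, "options": opts}


# Minimal sample using values from the post; "client-a" is a made-up folder id.
SAMPLE = """<configuration version="37">
  <folder id="client-a">
    <copiers>0</copiers>
    <pullerMaxPendingKiB>0</pullerMaxPendingKiB>
    <hashers>0</hashers>
    <disableTempIndexes>true</disableTempIndexes>
    <maxConcurrentWrites>2</maxConcurrentWrites>
    <disableFsync>true</disableFsync>
  </folder>
  <options>
    <setLowPriority>true</setLowPriority>
    <maxFolderConcurrency>32</maxFolderConcurrency>
    <databaseTuning>auto</databaseTuning>
    <maxConcurrentIncomingRequestKiB>0</maxConcurrentIncomingRequestKiB>
  </options>
</configuration>"""

summary = summarize(SAMPLE)
for folder_id, settings in summary["folders"].items():
    print(folder_id, settings)
print("options", summary["options"])
```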

Known bottleneck: the HDD holding the index database is overloaded. The index (index-v0.14.0.db) is located on a separate disk dedicated to it (not used for anything else), but that is not enough for it to perform well. An atop screenshot is attached.
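For a sense of how large the index might get, here is a rough projection from the numbers above. It assumes the reported "29G" means GiB, that index size scales linearly with the number of files plus folders, and that nothing else contributes; all three are simplifications, so the result is an order-of-magnitude estimate only.

```python
# Figures quoted in the post.
INDEX_SIZE_GIB = 29              # current index size, assumed to be GiB
CURRENT_ITEMS = 16_357_304 + 3_451_993       # folders + files on the server now
TARGET_ITEMS = 50_000_000 + 100_000_000      # estimated folders + files after initial sync

# Average index footprint per item, in bytes.
bytes_per_item = INDEX_SIZE_GIB * 2**30 / CURRENT_ITEMS

# Linear extrapolation to the target item count (an assumption, not a measurement).
projected_gib = bytes_per_item * TARGET_ITEMS / 2**30

print(f"~{bytes_per_item:.0f} bytes of index per item")
print(f"~{projected_gib:.0f} GiB projected index size at {TARGET_ITEMS:,} items")
```

Under these assumptions the index would grow to a couple of hundred GiB, which is worth keeping in mind when sizing whatever storage the index is moved to.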

I need help to:

- Increase network sync speed to the limits of the NIC (or at least to 100 Mbps per client, which is a reasonable starting value).

- Offload the Syncthing index database so that Syncthing is not HDD-bound.

syncthing-heap-linux-amd64-v1.23.4-205409.pprof (550.4 KB) syncthing-cpu-linux-amd64-v1.23.4-205335.pprof (37.9 KB)