I have two Windows 11 virtual machines on Proxmox, each with Syncthing. I also run Syncthing as an app in TrueNAS Scale. The TrueNAS is also virtualized on Proxmox. All three are using the same Linux bridge as a virtual interface in Proxmox, all on the same subnetwork.

One of the virtualized Windows machines connects via TCP WAN and is speedy enough. The other connects only by Relay WAN no matter what I’ve tried, and the connection is very slow. My ultimate goal would be to have both using TCP LAN if that’s possible, but the immediate goal is to have the Relay WAN connection changed to at least TCP WAN.

As further background, I have changed the address of the machine on the Relay WAN to “tcp://ip address:22000, dynamic”, in the Syncthing GUI on both the Windows instance and on TrueNAS. The result is that it takes longer to establish the connection, but the connection is still Relay WAN.

The firewall rules on the Windows 11 machines are the same for both. The incoming rules for the Syncthing application permit all ports for TCP and UDP. I assume that I don’t need separate ports in the TrueNAS instance of Syncthing for the listen address for the two Windows instances.

I’ve been through the several posts in this forum that seem apt, but haven’t found any answers. I’ve tried other Syncthing settings that don’t work. Does anyone out there have suggestions for me?

To start with the easy things:

- are the VMs able to ping each other?

- could you check the logs for dial events?

Separately from the with/without relay discussion, I wouldn’t get hung up on the WAN vs LAN distinction. Basically, I think that if the connection destination isn’t on the same subnet, Syncthing considers it a WAN connection. In my case I’m running Syncthing in a Docker container on a NAS which is on my LAN at 192.168.x.x, with the port forwarded. Internally, Docker exposes a 172.x.x.x network, and depending on whether the NAS connects to other LAN devices first or other devices connect to the NAS first, the NAS shows destination IPs in 172.x.x.x or 192.168.x.x. So the LAN or WAN indication is really meaningless.

Mike, my second VM is now connecting with TCP LAN. Bug Reporter, the two VMs are able to ping each other with less than 1ms round trip time. Here are the logs from Syncthing on TrueNAS for start-up this morning:

2023-07-30 16:03:08 My ID: [ID OF SYNCTHING ON TRUENAS]

2023-07-30 16:03:09 Single thread SHA256 performance is 2499 MB/s using minio/sha256-simd (648 MB/s using crypto/sha256).

2023-07-30 16:03:09 Hashing performance is 1545.86 MB/s

2023-07-30 16:03:09 Overall send rate is unlimited, receive rate is unlimited

2023-07-30 16:03:09 Using discovery mechanism: global discovery server https://discovery.syncthing.net/v2/?noannounce&id=LYXKCHX-VI3NYZR-ALCJBHF-WMZYSPK-QG6QJA3-MPFYMSO-U56GTUK-NA2MIAW

2023-07-30 16:03:09 Using discovery mechanism: global discovery server https://discovery-v4.syncthing.net/v2/?nolookup&id=LYXKCHX-VI3NYZR-ALCJBHF-WMZYSPK-QG6QJA3-MPFYMSO-U56GTUK-NA2MIAW

2023-07-30 16:03:09 Using discovery mechanism: global discovery server https://discovery-v6.syncthing.net/v2/?nolookup&id=LYXKCHX-VI3NYZR-ALCJBHF-WMZYSPK-QG6QJA3-MPFYMSO-U56GTUK-NA2MIAW

2023-07-30 16:03:09 Using discovery mechanism: IPv4 local broadcast discovery on port 21027

2023-07-30 16:03:09 Using discovery mechanism: IPv6 local multicast discovery on address [ff12::8384]:21027

2023-07-30 16:03:09 Ready to synchronize “Quicken” (quicken) (sendreceive)

2023-07-30 16:03:09 …

2023-07-30 16:03:09 QUIC listener ([::]:22000) starting

2023-07-30 16:03:09 TCP listener ([::]:22000) starting

2023-07-30 16:03:09 Relay listener (dynamic+https://relays.syncthing.net/endpoint) starting

2023-07-30 16:03:09 GUI and API listening on [::]:8384

2023-07-30 16:03:09 Access the GUI via the following URL: https://127.0.0.1:8384/

2023-07-30 16:03:09 My name is “TrueNAS”

2023-07-30 16:03:09 Device [ID OF SYNCTHING ON WINDOWS VM CONNECTED BY TCP LAN] is “QuickWin” at [dynamic]

2023-07-30 16:03:09 Device [ID OF SYNCTHING ON WINDOWS VM CONNECTED BY RELAY WAN] is “SJM” at [tcp://192.168.10.20:22000 dynamic]

2023-07-30 16:03:09 Ready to synchronize “Stan’s Documents” (stans.documents) (sendreceive)

2023-07-30 16:03:09 Completed initial scan of sendreceive folder “Quicken” (quicken)

2023-07-30 16:03:10 Completed initial scan of sendreceive folder “Stan’s Documents” (stans.documents)

2023-07-30 16:03:19 quic://0.0.0.0:22000 detected NAT type: Symmetric NAT

2023-07-30 16:03:23 Detected 1 NAT service

2023-07-30 16:04:16 Joined relay relay://64.86.168.59:443

2023-07-30 16:04:43 Established secure connection to [ID OF SYNCTHING ON WINDOWS VM CONNECTED BY RELAY WAN] at 172.16.1.71:45386-64.86.168.59:443/relay-server/TLS1.3-TLS_AES_128_GCM_SHA256/WAN-P50

2023-07-30 16:04:43 Device [ID OF SYNCTHING ON WINDOWS VM CONNECTED BY RELAY WAN] client is “syncthing v1.23.6” named “SJM” at 172.16.1.71:45386-64.86.168.59:443/relay-server/TLS1.3-TLS_AES_128_GCM_SHA256/WAN-P50

2023-07-30 16:05:37 Established secure connection to [ID OF SYNCTHING ON WINDOWS VM CONNECTED BY TCP LAN] at 172.16.1.71:22000-192.168.10.48:22000/tcp-client/TLS1.3-TLS_AES_128_GCM_SHA256/LAN-P10

2023-07-30 16:05:37 Device [ID OF SYNCTHING ON WINDOWS VM CONNECTED BY TCP LAN] client is “syncthing v1.23.6” named “QuickWin” at 172.16.1.71:22000-192.168.10.48:22000/tcp-client/TLS1.3-TLS_AES_128_GCM_SHA256/LAN-P10

Gadget, I received your email but don’t know how to respond other than by posting here. You said: “Per Syncthing’s log on the TrueNAS Scale VM, it looks like “SJM” and “QuickWin” are both configured with the same host IP address (172.16.1.71)…” I don’t know how to interpret the last four entries from the logs, but the two Windows VMs are definitely not configured with the IP address of 172.16.1.71. I suspect that address is used somehow by Syncthing in connection with the relay servers. The machine that connects with TCP LAN is shown in the next to last entry as connected “at 172.16.1.71:22000-192.168.10.48:22000/tcp-client”. 192.168.10.48 is the address of that Windows VM. The machine that connects with RelayWAN is shown in the fourth from last entry as connected “at 172.16.1.71:45386-64.86.168.59:443/relay-server”.
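
For anyone else puzzling over those last four log entries, here is a minimal Python sketch that splits the connection description into its parts. It assumes the layout that the log lines themselves suggest (local address, remote address, connection type, TLS details, then LAN/WAN and a priority); the class and function names are made up for illustration and are not part of Syncthing.

```python
from typing import NamedTuple


class ConnInfo(NamedTuple):
    local: str        # address on the machine that wrote the log
    remote: str       # address of the other end (peer or relay)
    conn_type: str    # e.g. "tcp-client" or "relay-server"
    tls_version: str
    cipher: str
    locality: str     # "LAN" or "WAN"
    priority: int


def parse_connection(desc: str) -> ConnInfo:
    """Split 'local-remote/type/TLS-cipher/LOCALITY-Pnn' into its fields."""
    addrs, conn_type, crypto, tail = desc.split("/")
    local, _, remote = addrs.partition("-")
    tls_version, _, cipher = crypto.partition("-")
    locality, _, prio = tail.partition("-P")
    return ConnInfo(local, remote, conn_type, tls_version, cipher, locality, int(prio))


# The two connection strings from the TrueNAS log above.
relay = parse_connection(
    "172.16.1.71:45386-64.86.168.59:443/relay-server/TLS1.3-TLS_AES_128_GCM_SHA256/WAN-P50"
)
direct = parse_connection(
    "172.16.1.71:22000-192.168.10.48:22000/tcp-client/TLS1.3-TLS_AES_128_GCM_SHA256/LAN-P10"
)
print(relay.local, "->", relay.remote, relay.conn_type, relay.locality)
print(direct.local, "->", direct.remote, direct.conn_type, direct.locality)
```

Read this way, 172.16.1.71 is the local end in both entries, i.e. the address of the Syncthing instance that wrote the log, not an address of either Windows VM.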

Sorry for the confusion. I quickly realized I’d interpreted the log incorrectly (not keeping in mind it originated from TrueNAS), so I removed my post and had planned on posting a new one.

What I was going to suggest is that, if all of the Syncthing connections are only between the VMs, you create a second bridge in PVE (not linked to a physical network interface on the host), add a second NIC to each VM, and then configure Syncthing to bind only to that NIC on each VM.

It would be significantly faster, especially if the current bridge is linked to a physical port, which in turn is connected to a physical network switch.

It would also help ensure that syncing traverses across the bridge instead of intermittently going out to a Syncthing relay on the internet.

Alternatively, change the network setting for the Syncthing app in TrueNAS Scale to host networking so that Syncthing inside the container shares the IP address for TrueNAS. This way all three VMs are within the same LAN (in Syncthing’s eyes).

@Stan you don’t happen to use docker? That IP address looks like a container internal address.

Gadget, I tried your alternative and that worked. So, problem solved and thanks very much. If you have the patience, I’m curious about your first suggestion, the additional PVE bridge, and will attempt that, just for the learning experience. How would I bind each Syncthing instance only to a PVE bridge that is not linked to a physical network? Where in the Syncthing GUI would I enter the bridge? Advanced Configuration/Device/Addresses? And what would I enter without an IP address?

Bug Reporter, I have only shallow experience with Docker, which is used by TrueNAS for its applications. Until I thought about Gadget’s alternative solution, I hadn’t realized that the Syncthing app on TrueNAS was using an IP address different from TrueNAS. I appreciate the thought that you’ve put into my questions.

You’re welcome.

Sure, no problem.

(Didn’t want to force others who stumble across this post to have to scroll really far to read follow-up posts, so click the arrow below to expand. TL;DR.)

Syncthing doesn’t need to know anything about the PVE bridge.

A bridge in PVE is effectively a network switch, similar in basic functionality to the ones commonly found in home and corporate networks.

Your VMs (and Syncthing) don’t care about the bridge and don’t need to know about it. All your VMs and Syncthing need to know are the assigned IP addresses.

During installation, PVE automatically creates a default bridge labeled vmbr0 that’s linked to the primary NIC selected via the install wizard.

Via PVE’s web GUI (unless you want to try it from the CLI) …

(I’m currently running PVE 8.0.3, and my Firefox is set to dark mode, so my screenshots might not exactly match what you see, but it’ll be close enough.)

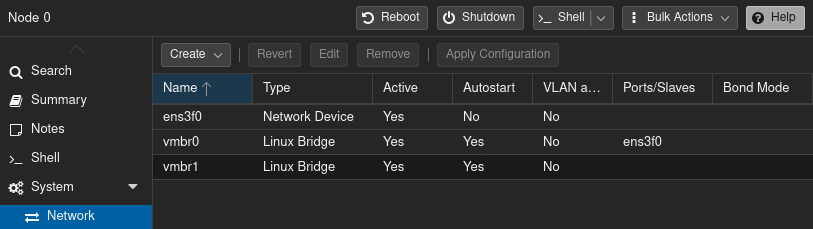

In the left navigation panel, click the host/node to change network settings for. Then in the center panel, under “System”, click “Network”. It’ll look something like this:

Note how I have two Linux bridge devices: vmbr0, vmbr1

The network device ens3f0 is my real NIC – it’s not guaranteed to be the same name on your system. It could be eth0, eno8403 or something else entirely different depending on your hardware configuration.

Linux bridge device vmbr0 is “bridged” to network device ens3f0. Think of it like a physical desktop switch that’s plugged into a network jack on a router/gateway.

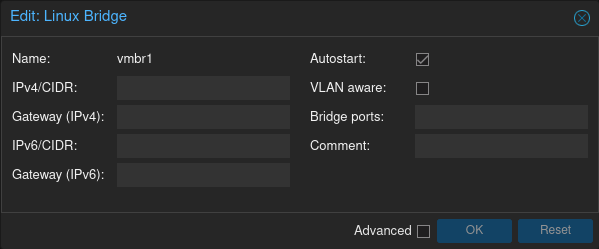

Linux bridge device vmbr1 is bridged to nothing. Selecting vmbr1 and clicking the [Edit] buttion pops up the following dialog box:

For the purposes of linking all of the VMs together on a private LAN, the “IPv4/CIDR”, “Gateway (IPv4)”, “IPv6/CIDR”, “Gateway (IPv6)” and “Bridge ports” fields are all optional. (Specifying a value for IPv4/IPv6 allows the host to reach the VMs across the bridge, i.e., the host would be part of the private LAN).

Click the [Apply Configuration] button. The new Linux bridge is immediately usable after applying the changes, no reboot required.
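
For the CLI route, a bridge with no uplink should come down to a short stanza in /etc/network/interfaces on the PVE host. The Python sketch below only renders that text so it can be compared with what the GUI writes; the helper name is hypothetical, and the stanza is an assumption based on the usual ifupdown bridge options, so check it against your own file before relying on it.

```python
def render_isolated_bridge(name: str = "vmbr1") -> str:
    """Return an ifupdown stanza for a Linux bridge with no physical port."""
    lines = [
        f"auto {name}",
        f"iface {name} inet manual",  # no host IP: the host stays off the private LAN
        "\tbridge-ports none",        # not linked to any physical NIC
        "\tbridge-stp off",
        "\tbridge-fd 0",
    ]
    return "\n".join(lines) + "\n"


print(render_isolated_bridge())
```

Leaving the address out matches the optional fields described above; giving the bridge an IPv4/CIDR value would instead put the host on the private LAN as well.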

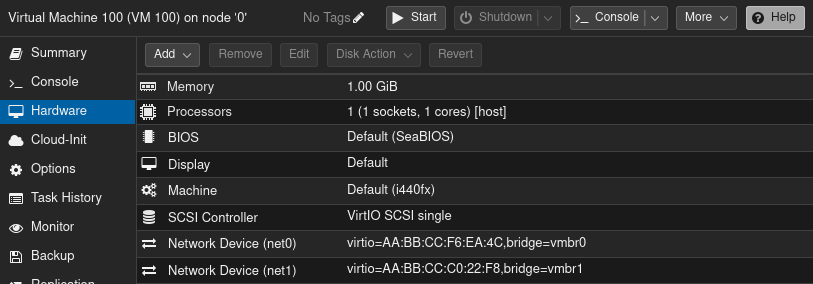

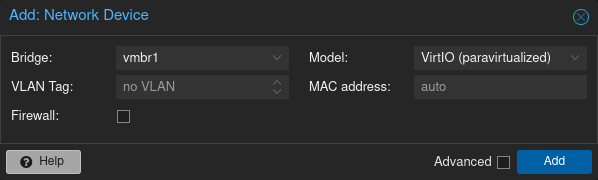

Next, reconfigure each VM that needs to be part of the new virtual LAN:

- Shut down a VM.

- Add a new network device for the VM.

- For the bridge, select vmbr1.

- Uncheck the “Firewall” option (there’s no need to protect it with PVE’s firewall since vmbr1 has no uplink).

Example VM configuration with two virtual NICs:

(Depending on the guest OS type that was selected, the default network device model might be “VirtIO (paravirtualized)” or “Intel E1000”. The former for Linux, the latter for Windows. For best performance and less load on the host, use the VirtIO drivers whenever possible. Pointers at the end of this post.)

From this point it’s all typical network setup in an OS:

- Boot the VM.

- Configure the new (virtual) NIC.

For a handful of VMs, it’s generally not worth the hassle to set up a DHCP server. Managing a small set of static IP addresses isn’t difficult.

Assign the new NIC any IP address you want, but it’s customary to use a private IP address range. Since most consumer routers are factory configured to use addresses in the 192.168.0.0/16 block (historically “class C” networks), I like to use the 10.0.0.0/8 block (“class A”) because there’s much less chance of a conflict with a home network.

10.10.10.11 is short, and easy to remember, so it’s my go-to choice for a host IP address.

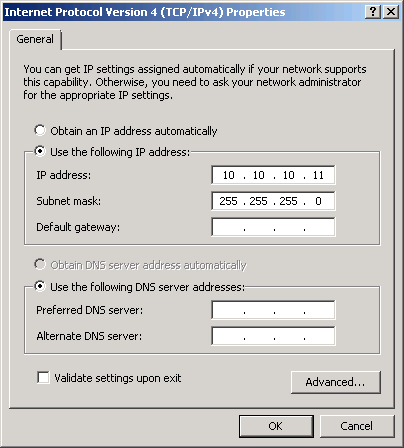

Unless there’s a need for more than 254 host IP addresses on the same Linux bridge, a netmask of 255.255.255.0 is fine. Summarizing:

- IP address: 10.10.10.11

- Subnet mask: 255.255.255.0

- Default gateway: n/a

- DNS: n/a

Example screenshot of the network adapter properties dialog box from Windows 7:

For each additional VM that’s added to the same vmbr1 Linux bridge, assign another unused IP address in the same range, e.g.:

- TrueNAS Scale: 10.10.10.11

- Windows 1: 10.10.10.12

- Windows 2: 10.10.10.13
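
With static addresses it is easy to reuse an address by accident, so here is a small Python sketch that sanity-checks a plan like the one above. The function name and the VM labels are illustrative only; the addresses and netmask are the ones from this post.

```python
import ipaddress


def validate_plan(plan, netmask="255.255.255.0"):
    """Check that every host has a unique address and all share one subnet."""
    interfaces = {
        name: ipaddress.ip_interface(f"{ip}/{netmask}") for name, ip in plan.items()
    }
    addresses = [iface.ip for iface in interfaces.values()]
    if len(set(addresses)) != len(addresses):
        raise ValueError("duplicate IP address in plan")
    networks = {iface.network for iface in interfaces.values()}
    if len(networks) != 1:
        raise ValueError("hosts are not all in the same subnet")
    return networks.pop()


plan = {
    "TrueNAS Scale": "10.10.10.11",
    "Windows 1": "10.10.10.12",
    "Windows 2": "10.10.10.13",
}
network = validate_plan(plan)
usable_hosts = network.num_addresses - 2  # minus network and broadcast addresses
home_lan = ipaddress.ip_network("192.168.0.0/16")
print(network, usable_hosts, network.overlaps(home_lan))
```

The last value printed shows whether the private range collides with the typical home router block mentioned earlier.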

Once that’s done, each VM will be multihomed.

By default, Syncthing will see the new NIC and automatically bind to it the next time it starts.

That might be perfectly fine, but to tell Syncthing to only use the new NIC connected to the private LAN for syncing, in Syncthing’s web GUI, click Actions → Settings → Connections and adjust the box labeled “Sync Protocol Listen Addresses”.

The default setting is default, which tells Syncthing to bind to all available network interfaces.

If the TrueNAS Scale VM has the IP address 10.10.10.11, then the listen address parameter would be tcp4://10.10.10.11:22000.

Rinse and repeat for the other VMs, i.e., tcp4://10.10.10.12:22000, tcp4://10.10.10.13:22000, etc.

(See Syncthing’s documentation about Listen Addresses for more details.)

Syncthing’s global discovery, NAT traversal and relaying can be disabled. Local discovery is enough for the VMs to find each other.
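
The same settings can also be changed without the GUI through Syncthing's REST API. The sketch below is a hedged example rather than a recipe: it assumes the config endpoint /rest/config/options and the option names used in Syncthing's config file (listenAddresses, globalAnnounceEnabled, localAnnounceEnabled, relaysEnabled, natEnabled), and the GUI URL and API key are placeholders. It only builds the request; nothing is sent unless you uncomment the last line.

```python
import json
import urllib.request


def build_options_patch(listen_ip, api_key, gui_url="http://127.0.0.1:8384"):
    """Build (but do not send) a PATCH request for Syncthing's options."""
    options = {
        "listenAddresses": [f"tcp4://{listen_ip}:22000"],
        "globalAnnounceEnabled": False,  # no global discovery
        "localAnnounceEnabled": True,    # keep local discovery
        "relaysEnabled": False,          # no relaying
        "natEnabled": False,             # no NAT traversal
    }
    return urllib.request.Request(
        url=f"{gui_url}/rest/config/options",
        data=json.dumps(options).encode("utf-8"),
        method="PATCH",
        headers={"X-API-Key": api_key, "Content-Type": "application/json"},
    )


# Placeholder key: the real one is shown in the GUI under Actions -> Settings.
request = build_options_patch("10.10.10.11", api_key="REPLACE-WITH-YOUR-API-KEY")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# urllib.request.urlopen(request)  # uncomment to actually apply the change
```

If the GUI is served over HTTPS, as in the TrueNAS log earlier in this thread, pass the matching gui_url.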

Running iperf3 tests, I get over 30 Gbit/s between VMs over a bridge that’s not connected to a physical port on a network switch.

PVE…

- If there aren’t two or more hosts in a cluster for live migrations, set the processor type to “host” so that the guest OS will optimize itself for best performance on the physical CPU.

- The VirtIO drivers offer the highest performance. Linux works out-of-the-box, but Windows requires more hand holding: https://fedorapeople.org/groups/virt/virtio-win/direct-downloads/stable-virtio/virtio-win.iso

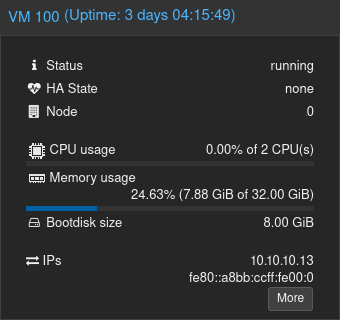

Bundled in the VirtIO drivers package is a tiny guest agent that relays useful info to the host such as the IP addresses in use:

The guest agent also provides hooks for the host to send graceful shutdown requests to a guest OS, etc.

Gadget: Wow! You obviously have a lot of patience. Thanks for such a thorough explanation. The three Proxmox VMs now use the same vmbr and can use a different vmbr not attached to a physical interface. In the near future, I will add a couple of non-virtualized Windows machines which will need access to Syncthing on TrueNAS through a switch. From what you’ve said, I assume that I can then add the TrueNAS ip:22000 as a second listen address on the TrueNAS Syncthing instance, and that would work. Thanks again for all of the effort you’ve put into this.

The best solution would be to run your docker container with network mode host but this seems to be not directly possible with TrueNAS.

The result of a quick Google search is that you might get similar results with a dedicated container IP and the option “Provide access to node network namespace for the workload” in the TrueNAS UI.

Yes, you could add the other virtual NIC on the TrueNAS VM to Syncthing – or revert Syncthing’s listen address back to default so that all network interfaces are used.

If you do that, keep in mind that there’s a good chance that the two Windows VMs will detect the TrueNAS on the physical LAN via the vmbr0 bridge and could use that network path instead of the private LAN on 10.0.0.0/8 provided by the vmbr1 bridge.

And because vmbr0 has access to the internet, it also opens up the possibility that Syncthing traffic ends up passing thru a Syncthing relay. Although with Syncthing on TrueNAS in host networking mode, it should be less likely.

To ensure that the two Windows VMs always use the vmbr1 bridge, change the TrueNAS device address on each of the Windows VM Syncthing instances from dynamic to tcp://10.10.10.11:22000 (basically telling Syncthing exactly where to look for the TrueNAS).
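
Before pinning the device address, it can help to confirm from each Windows VM that the TrueNAS listener is actually reachable over the private LAN. A minimal Python sketch, assuming Python is installed on the VM; the function name is made up, and 10.10.10.11 with port 22000 are the example values from this thread.

```python
import socket


def tcp_port_open(host: str, port: int = 22000, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable networks.
        return False


if __name__ == "__main__":
    print(tcp_port_open("10.10.10.11"))
```

A result of False points at addressing, the guest firewall, or the listen address on TrueNAS rather than at Syncthing's discovery.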
