Hi,

Before performing any action, here is the script I ran and what each device returned:

#

$folder = "n4q4z-ohpfz"

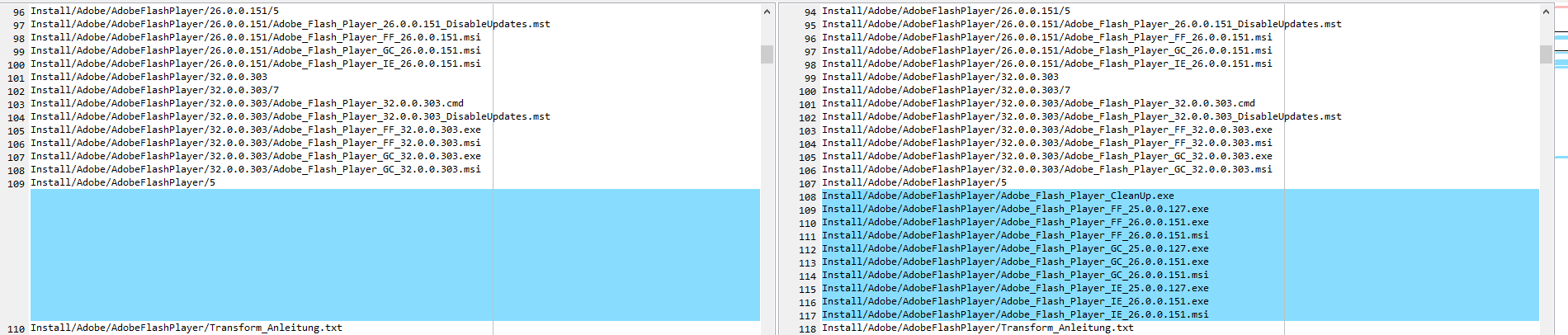

$file = "Adobe/AdobeFlashPlayer/Adobe_Flash_Player_CleanUp.exe"

#

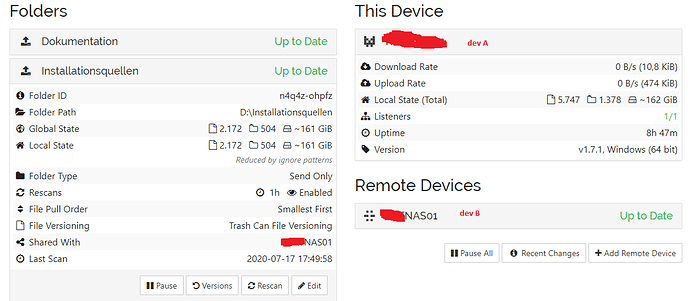

"*** devA ***"

c:\windows\curl.exe -s -X GET -H "X-API-Key: $apiKeyA" 'http://'$ipDevA'/rest/db/file?folder='$folder'&file='$file

#

""

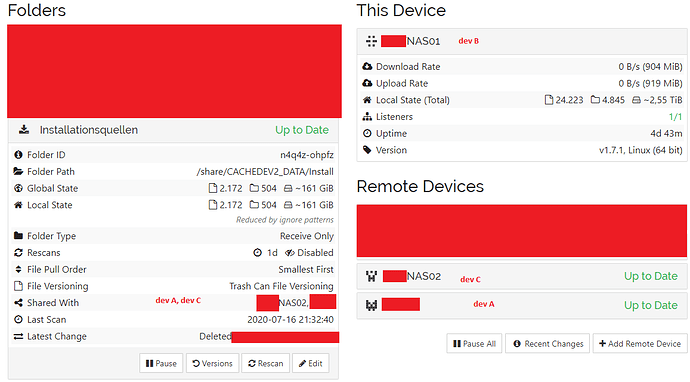

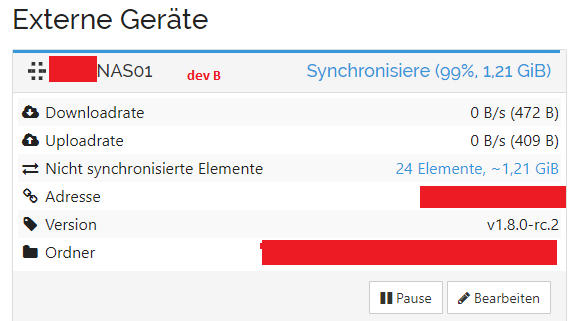

"*** devB ***"

c:\windows\curl.exe -s -X GET -H "X-API-Key: $apiKeyB" 'http://'$ipDevB'/rest/db/file?folder='$folder'&file='$file

#

""

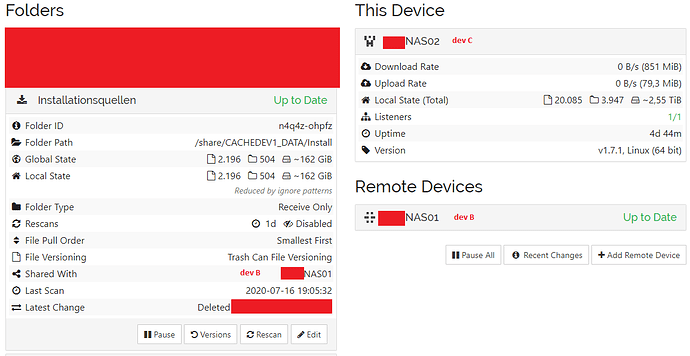

"*** devC*** "

c:\windows\curl.exe -s -X GET -H "X-API-Key: $apiKeyC" 'http://'$ipDevC'/rest/db/file?folder='$folder'&file='$file
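For anyone who wants to repeat the same query without curl, here is a minimal Python sketch of how the request could be built. The endpoint, the `folder` and `file` parameters and the `X-API-Key` header are taken from the script above; the host `127.0.0.1:8384`, the key `example-key` and the helper name `build_file_query` are hypothetical placeholders, not values from my setup.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_file_query(host, api_key, folder, file):
    """Build (but do not send) a GET request for /rest/db/file on one device."""
    # urlencode percent-encodes the values, so "/" in the path becomes %2F.
    query = urlencode({"folder": folder, "file": file})
    return Request(
        f"http://{host}/rest/db/file?{query}",
        headers={"X-API-Key": api_key},
        method="GET",
    )


# Hypothetical host and API key, for illustration only.
req = build_file_query(
    "127.0.0.1:8384",
    "example-key",
    "n4q4z-ohpfz",
    "Adobe/AdobeFlashPlayer/Adobe_Flash_Player_CleanUp.exe",
)
print(req.full_url)
```

Sending it would be a matter of passing `req` to `urllib.request.urlopen` and parsing the body with `json.load`; that part is left out here because it needs a reachable device.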

*** devA ***

    {
      "availability": [
        {
          "id": "7E4EKDA-DEXQAEY-DIKXM2L-6XO7YWS-ARPGAW6-CRUSAI7-U2U5UMP-CCZKRAH",
          "fromTemporary": false
        }
      ],
      "global": {
        "deleted": true,
        "ignored": false,
        "invalid": false,
        "localFlags": 0,
        "modified": "2017-09-05T14:25:19.0109486+02:00",
        "modifiedBy": "VBHJ3YR",
        "mustRescan": false,
        "name": "Adobe\\AdobeFlashPlayer\\Adobe_Flash_Player_CleanUp.exe",
        "noPermissions": false,
        "numBlocks": 0,
        "permissions": "0",
        "sequence": 15661,
        "size": 0,
        "type": "FILE",
        "version": [
          "LRORKSI:1",
          "VBHJ3YR:2",
          "VPYAMUE:1",
          "ZTMOUGV:1",
          "7E4EKDA:1"
        ]
      },
      "local": {
        "deleted": true,
        "ignored": false,
        "invalid": false,
        "localFlags": 0,
        "modified": "2017-09-05T14:25:19.0109486+02:00",
        "modifiedBy": "VBHJ3YR",
        "mustRescan": false,
        "name": "Adobe\\AdobeFlashPlayer\\Adobe_Flash_Player_CleanUp.exe",
        "noPermissions": false,
        "numBlocks": 0,
        "permissions": "0",
        "sequence": 15661,
        "size": 0,
        "type": "FILE",
        "version": [
          "LRORKSI:1",
          "VBHJ3YR:2",
          "VPYAMUE:1",
          "ZTMOUGV:1",
          "7E4EKDA:1"
        ]
      }
    }

*** devB ***

    {
      "availability": [
        {
          "id": "VBHJ3YR-WXRWUR4-GVZBFIL-4I2GE5T-TYHNPZY-NY3PKDS-Y2AFDBO-MZNP4AD",
          "fromTemporary": false
        },
        {
          "id": "VPYAMUE-D44JYED-5DNCNOV-QNNFESH-QLVRLP4-525HK3X-GK5RXN4-QS5LMQY",
          "fromTemporary": false
        }
      ],
      "global": {
        "deleted": true,
        "ignored": false,
        "invalid": false,
        "localFlags": 0,
        "modified": "2017-09-05T14:25:19.0109486+02:00",
        "modifiedBy": "VBHJ3YR",
        "mustRescan": false,
        "name": "Adobe/AdobeFlashPlayer/Adobe_Flash_Player_CleanUp.exe",
        "noPermissions": false,
        "numBlocks": 0,
        "permissions": "0",
        "sequence": 15319,
        "size": 0,
        "type": "FILE",
        "version": [
          "LRORKSI:1",
          "VBHJ3YR:2",
          "VPYAMUE:1",
          "ZTMOUGV:1",
          "7E4EKDA:1"
        ]
      },
      "local": {
        "deleted": true,
        "ignored": false,
        "invalid": false,
        "localFlags": 0,
        "modified": "2017-09-05T14:25:19.0109486+02:00",
        "modifiedBy": "VBHJ3YR",
        "mustRescan": false,
        "name": "Adobe/AdobeFlashPlayer/Adobe_Flash_Player_CleanUp.exe",
        "noPermissions": false,
        "numBlocks": 0,
        "permissions": "0",
        "sequence": 15319,
        "size": 0,
        "type": "FILE",
        "version": [
          "LRORKSI:1",
          "VBHJ3YR:2",
          "VPYAMUE:1",
          "ZTMOUGV:1",
          "7E4EKDA:1"
        ]
      }
    }

*** devC***

    {
      "availability": [
        {
          "id": "7E4EKDA-DEXQAEY-DIKXM2L-6XO7YWS-ARPGAW6-CRUSAI7-U2U5UMP-CCZKRAH",
          "fromTemporary": false
        }
      ],
      "global": {
        "deleted": true,
        "ignored": false,
        "invalid": false,
        "localFlags": 0,
        "modified": "2017-09-05T14:25:19.0109486+02:00",
        "modifiedBy": "VBHJ3YR",
        "mustRescan": false,
        "name": "Adobe/AdobeFlashPlayer/Adobe_Flash_Player_CleanUp.exe",
        "noPermissions": false,
        "numBlocks": 0,
        "permissions": "0",
        "sequence": 15319,
        "size": 0,
        "type": "FILE",
        "version": [
          "LRORKSI:1",
          "VBHJ3YR:2",
          "VPYAMUE:1",
          "ZTMOUGV:1",
          "7E4EKDA:1"
        ]
      },
      "local": {
        "deleted": true,
        "ignored": false,
        "invalid": false,
        "localFlags": 0,
        "modified": "2017-09-05T14:25:19.0109486+02:00",
        "modifiedBy": "VBHJ3YR",
        "mustRescan": false,
        "name": "Adobe/AdobeFlashPlayer/Adobe_Flash_Player_CleanUp.exe",
        "noPermissions": false,
        "numBlocks": 0,
        "permissions": "0",
        "sequence": 31923,
        "size": 0,
        "type": "FILE",
        "version": [
          "LRORKSI:1",
          "VBHJ3YR:2",
          "VPYAMUE:1",
          "ZTMOUGV:1",
          "7E4EKDA:1"
        ]
      }
    }
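Since the three outputs are long and nearly identical, a small Python sketch like the one below could help spot which fields actually differ between two records. The helper name `differing_fields` is my own, and the two dictionaries only carry a few fields copied from the devC output above (the rest are omitted for brevity); it draws no conclusion about what a difference means.

```python
def differing_fields(a, b):
    """Return {field: (a_value, b_value)} for every field whose values differ."""
    keys = sorted(set(a) | set(b))
    return {k: (a.get(k), b.get(k)) for k in keys if a.get(k) != b.get(k)}


# A few fields copied from the devC "global" and "local" records above.
devc_global = {"deleted": True, "modifiedBy": "VBHJ3YR", "sequence": 15319}
devc_local = {"deleted": True, "modifiedBy": "VBHJ3YR", "sequence": 31923}

print(differing_fields(devc_global, devc_local))
# prints {'sequence': (15319, 31923)}
```

The same function can be pointed at the full parsed `global` records of two devices to compare them, for example devA against devB.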

- LRORKSI = ??? I don’t know what this is, maybe an old device that was removed?

- VBHJ3YR = devA

- VPYAMUE = devC

- 7E4EKDA = devB
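To make the `version` arrays easier to read, the short IDs can be labelled with the device names from the list above. This is only a sketch: the function name `label_version` is hypothetical, the mapping contains just the three IDs I could identify, and anything else is reported as `unknown`.

```python
def label_version(version, names):
    """Turn entries like 'VBHJ3YR:2' into (short_id, device_name, counter) tuples."""
    labelled = []
    for entry in version:
        short_id, counter = entry.split(":")
        labelled.append((short_id, names.get(short_id, "unknown"), int(counter)))
    return labelled


# Mapping from the list above; LRORKSI and ZTMOUGV are not identified there.
names = {"VBHJ3YR": "devA", "VPYAMUE": "devC", "7E4EKDA": "devB"}
version = ["LRORKSI:1", "VBHJ3YR:2", "VPYAMUE:1", "ZTMOUGV:1", "7E4EKDA:1"]

for row in label_version(version, names):
    print(row)
```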