Hi all,

I have a Production machine with constant file changes and a fail-over machine that needs to be kept as up to date as possible.

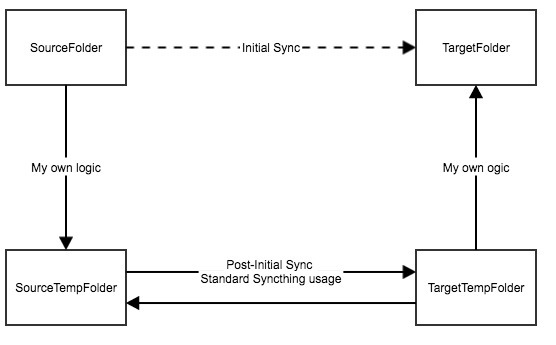

I need to use Syncthing in two different scenarios:

- Initial synchronization

- Post-Initial synchronization

The Initial synchronization scenario is where I need to do something similar to a backup/snapshot from the Production machine (let’s call it SourceFolder) to the Fail-over machine (let’s call it TargetFolder). Once that has completed, I need to disable it and switch to the Post-Initial synchronization phase.

The Post-Initial synchronization scenario is the standard use of Syncthing, synchronizing any changes between SourceTempFolder and TargetTempFolder.

Summary:

The problem is that, since SourceFolder is getting constant file changes, Syncthing will probably never reach 100% sync, and consequently I cannot tell deterministically when it is safe to switch to the Post-Initial synchronization scenario.

What I was trying:

- Oldest First

  - Identify the most recently modified file (call it FileX) in SourceFolder

  - Set SourceFolder as Master and synchronize with oldestFirst

  - Monitor TargetFolder to see when FileX is created, and then stop the sync
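To make the first step concrete, here is a rough sketch of how FileX could be identified by walking SourceFolder and comparing modification times. The function name `find_newest_file` is just a placeholder of mine, and it assumes file mtimes on the Production machine are trustworthy.

```python
import os
from pathlib import Path
from typing import Optional, Tuple


def find_newest_file(root: str) -> Optional[Tuple[Path, float]]:
    """Return (path, mtime) of the most recently modified file under root.

    Hypothetical helper for illustration; returns None if root has no files.
    """
    newest: Optional[Tuple[Path, float]] = None
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                mtime = path.stat().st_mtime
            except OSError:
                # The file disappeared between listing and stat; skip it.
                continue
            if newest is None or mtime > newest[1]:
                newest = (path, mtime)
    return newest
```

Because SourceFolder keeps changing, the result is only valid for the moment the walk ran, so the returned mtime is best treated as a cutoff timestamp rather than as a guarantee about any particular file.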

The problem is that Syncthing transfers multiple files at the same time, so detecting FileX in TargetFolder doesn’t mean that all older files are already there too. Another problem is that Syncthing creates .tmp files that will stay there forever after the initial sync is stopped.
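For the leftover .tmp files, a cleanup pass over TargetFolder after stopping the initial sync might look like the sketch below. It assumes the temporary files follow the `.syncthing.<name>.tmp` naming (or `~syncthing~<name>.tmp` on Windows); that naming should be checked against the Syncthing version in use. The function name `remove_leftover_temp_files` is made up for this example, and it defaults to a dry run.

```python
from pathlib import Path
from typing import List

# Assumption: Syncthing temp files are named ".syncthing.<name>.tmp",
# or "~syncthing~<name>.tmp" on Windows. Verify this for your version.
TEMP_PATTERNS = (".syncthing.*.tmp", "~syncthing~*.tmp")


def remove_leftover_temp_files(root: str, dry_run: bool = True) -> List[Path]:
    """List (and optionally delete) leftover temp files under root.

    Hypothetical helper; with dry_run=True nothing is deleted.
    """
    found: List[Path] = []
    for pattern in TEMP_PATTERNS:
        for path in Path(root).rglob(pattern):
            if path.is_file():
                found.append(path)
                if not dry_run:
                    path.unlink()
    return sorted(found)
```

This should only be run while Syncthing is not syncing the folder, since an in-progress transfer would be using those files.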

- rescanIntervalS

  - Identify the most recently modified file (call it FileX) in SourceFolder

  - Set SourceFolder as Master and set rescanIntervalS to 0

  - When SourceFolder is added to Syncthing, it will scan once (like a snapshot) and start synchronizing

  - My own logic can then look only for changes that occurred after FileX’s modified date and copy those files from SourceFolder to SourceTempFolder
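The last step could be sketched roughly as follows: copy every file modified after FileX's timestamp from SourceFolder into SourceTempFolder, keeping the relative paths. The name `copy_changes_since` and its parameters are placeholders of mine, and this only illustrates the idea; it does not detect deletions, and a file modified at exactly the cutoff time is skipped.

```python
import os
import shutil
from pathlib import Path
from typing import List


def copy_changes_since(source: str, temp: str, cutoff_mtime: float) -> List[Path]:
    """Copy files under source modified after cutoff_mtime into temp.

    Hypothetical helper; returns the relative paths that were copied.
    """
    src_root = Path(source)
    dst_root = Path(temp)
    copied: List[Path] = []
    for dirpath, _dirnames, filenames in os.walk(src_root):
        for name in filenames:
            src = Path(dirpath) / name
            try:
                if src.stat().st_mtime <= cutoff_mtime:
                    continue  # unchanged since the snapshot
                rel = src.relative_to(src_root)
                dst = dst_root / rel
                dst.parent.mkdir(parents=True, exist_ok=True)
                shutil.copy2(src, dst)  # copy2 also preserves the mtime
            except OSError:
                # The file vanished or was locked mid-copy; skip it this pass.
                continue
            copied.append(rel)
    return sorted(copied)
```

The cutoff would be the mtime of FileX recorded before the one-time scan. Files that are still being written while they are copied may end up incomplete, so a second pass would probably be needed.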

During the synchronization, files may be deleted or modified. Since rescanIntervalS is set to 0, will Syncthing have problems with that?

Do you guys have any suggestions?

Thank you in advance, Eduardo